The all-new 2026 n’Space P371 makes its debut

2026 n’Space P371 unlocks spatial connectivity

Pioneering a new paradigm for human collaboration, bringing the world to you

Immerse yourself in work, study, and social interactions like never before

In n’Space, every moment feels authentic and every action carries meaning

Immersive and unrestrained

Centralized Inside-Out sensor array enables full-range real-time 3D body posture computation, capturing, recognizing, and transmitting skeletal data instantly

n’Space recognizes you, understands your intent, and helps you 'meet' more people

Matrix-style audio capture system with edge AI computing capabilities

Exceptional noise reduction and echo cancellation in complex acoustic environments

When communicating with friends afar

Even if they're on the other side of the world, they'll feel right beside you

Through n’Space

Meet more people in virtual 3D space, in your world

Not just seeing and feeling, but experiencing and connecting

When Spatial Intelligence Meets Physical Portals: Redefining the Endgame of Human-Computer Interaction

At ArchiFiction, we hold one conviction: the goal of digital transformation is not to force people into heavy shackles before they can enter the virtual world, but to make the digital world conform to human instinct

As Stanford Professor Fei-Fei Li stated, 'Spatial Intelligence' is the next frontier of AI

n'Space 2026 provides a full-body physical portal for this capability, transforming AI from code on screens into tangible reality in physical space

Connectivity can do far more than this

2026 n’Space P371 truly enables comprehensive spatial sharing

This means not just breaking spatiotemporal barriers, but enabling users to physically enter shared scenarios and engage in deep communication and learning from identical perspectives

The path from ideation through collaboration and verification to implementation has never been simpler

Algorithm as Moat: AI-Driven 'Scene Follows User'

The ultimate immersion of n'Space 2026 stems from deep AI geometric computing power.

To overcome interference from the projected background, occlusion of the human body, and the loss of skeletal information at top-view angles, we developed our own technical approach to top-mounted machine-vision recognition and tracking

The algorithm's lead stems from data quality. Our self-built 'Walk-in Mixed Reality Space Human Pose Recognition RGB Dataset' has been selected for Hangzhou's first batch of high-quality datasets

Models trained on this dataset are deeply optimized specifically for n'Space's unique top-down viewing angle

The 2026 model performs real-time AI inference at millisecond-level latency. The system not only locates head coordinates but also, using its evolved 2D/3D pose algorithms, precisely extracts skeletal key points under complex lighting conditions

This means that however you turn or crouch within the space, the algorithm keeps 'scene follows user' geometrically accurate
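One common way to make a projected scene follow a tracked viewer is an off-axis (asymmetric) view frustum recomputed from the head position on every frame. The sketch below illustrates that general technique only; it is not ArchiFiction's implementation, and the function name, the coordinate convention (wall in the plane z = 0, units in metres), and the parameters are all assumptions made for illustration.

```python
def off_axis_frustum(head, wall_min, wall_max, near=0.1):
    """Return (left, right, bottom, top) of the view frustum at the near plane.

    Hypothetical convention: the projected wall lies in the plane z = 0 and
    spans wall_min=(x0, y0) to wall_max=(x1, y1); the tracked head is at
    head=(ex, ey, ez) with ez > 0. All values are in metres.
    """
    ex, ey, ez = head
    if ez <= 0:
        raise ValueError("head must be in front of the wall (ez > 0)")
    # Similar triangles: project the wall edges onto the near plane.
    scale = near / ez
    (x0, y0), (x1, y1) = wall_min, wall_max
    return ((x0 - ex) * scale, (x1 - ex) * scale,
            (y0 - ey) * scale, (y1 - ey) * scale)


# Head centred in front of a 2 m x 2 m wall: the frustum is symmetric.
centered = off_axis_frustum((0.0, 0.0, 2.0), (-1.0, -1.0), (1.0, 1.0))
# Head moved 0.5 m to the right: the frustum skews to the left.
shifted = off_axis_frustum((0.5, 0.0, 2.0), (-1.0, -1.0), (1.0, 1.0))
```

Feeding the four extents into a standard perspective projection matrix keeps straight lines on the wall looking correct from wherever the viewer stands, which is why the head coordinates are the critical input.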

Integrated edge GPU computing power simultaneously drives a 5-camera depth matrix

Through multi-view trajectory correlation algorithms, the system resolves recognition consistency issues during multi-person interactions, compressing human-computer interaction latency below the perception threshold
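To give a feel for the identity-consistency problem in multi-person tracking, here is a deliberately simplified sketch: detections from several cameras are assumed to be already projected into one shared floor coordinate system, then matched to existing tracks by greedy nearest-neighbour association with a distance gate. This is a generic textbook approach, not the multi-view trajectory correlation algorithm used in n'Space; the function name, the `max_dist` gate, and the data layout are hypothetical.

```python
import math


def associate(tracks, detections, max_dist=0.5):
    """Map each detection index to a track id.

    tracks: dict of track id -> last known (x, y) floor position in metres.
    detections: list of (x, y) floor positions from the current frame.
    Detections farther than max_dist from every free track get a new id.
    """
    # All candidate pairs, closest first.
    pairs = sorted(
        (math.dist(pos, det), tid, i)
        for tid, pos in tracks.items()
        for i, det in enumerate(detections)
    )
    assigned, used_tracks = {}, set()
    for dist, tid, i in pairs:
        if dist > max_dist:
            break  # remaining pairs are even farther apart
        if tid in used_tracks or i in assigned:
            continue
        assigned[i] = tid
        used_tracks.add(tid)
    # Unmatched detections start new tracks.
    next_id = max(tracks, default=-1) + 1
    for i in range(len(detections)):
        if i not in assigned:
            assigned[i] = next_id
            next_id += 1
    return assigned


tracks = {0: (0.0, 0.0), 1: (2.0, 0.0)}
detections = [(2.1, 0.1), (0.1, 0.0), (5.0, 5.0)]
result = associate(tracks, detections)
```

In this example the first two detections keep the ids of the people they are closest to, and the third, which is far from both, is treated as a newcomer. A production system would typically add motion prediction and appearance cues on top of a pure distance test.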

Commercial Validation: AI-Driven Real-World Productivity

The approach that made n'Space successful in high-end construction is now being validated across more industries:

- MTR Reality Lab: In highly complex rail transit operation analysis, n'Space serves as the simulation terminal for 'spatial intelligence'. AI algorithms autonomously analyze massive volumes of operational trajectory data, allowing experts to step directly onto simulated platforms for real-time stress testing and spatial conflict inspection.

- Top University Virtual Simulation Centers: For education and research, n'Space allows expensive experimental equipment, microscopic particles, or complex mechanical structures to be 'placed' at 1:1 scale in physical rooms. Students can disassemble them collaboratively without wearing any device, which lowers cognitive load and greatly improves teaching efficiency.

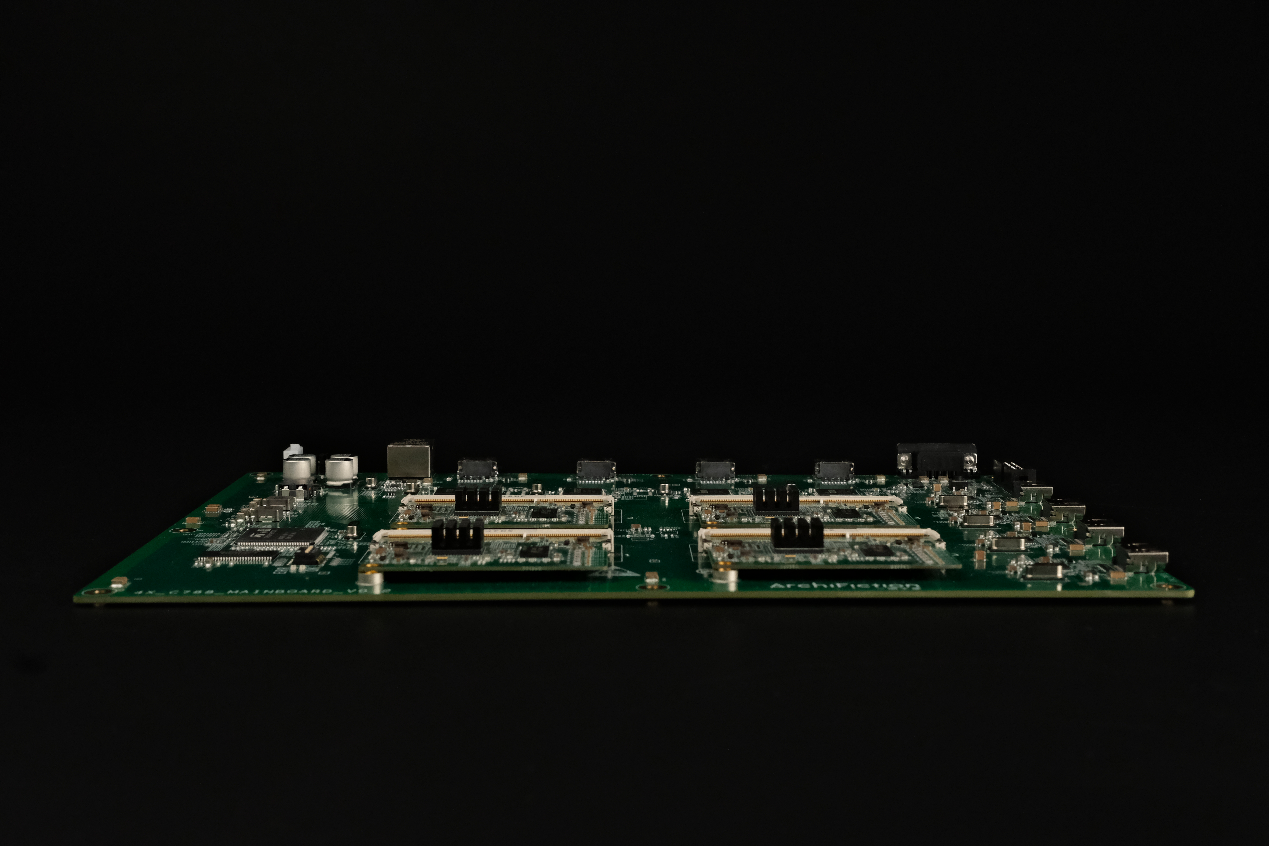

Hardware Pinnacle: Industrial-Grade Precision Physical Foundation

- Ultimate Visual Performance: Equipped with 22 custom laser modules, the system delivers 8.5K ultra-high-definition image quality and covers 110% of the BT.2020 color gamut. It natively supports real-time fusion of video from 360° panoramic devices and low-latency decoding of SRT/RTMP live streams, forming a complete three-dimensional information loop

- Original System-Level Cooling: To handle high-intensity computing output, the 2026 model features an original, efficient water-cooling system with temperature-adaptive algorithms, ensuring calm, stable operation even under 24/7 high load

Ultimate Evolution: Miniaturization and 'Calm' Industrial Aesthetics

Powerful performance no longer comes at the cost of volume; we make performance lighter and more reliable

- 35% Volume Reduction, 50% Power Consumption Drop: With 8 years of engineering accumulation, single-unit power consumption has been optimized to an astonishing 85W. This not only means lower operating costs but also enables n'Space to be more flexibly deployed in offices, control centers, and even mobile laboratories.

- The Advantage of Engineering Experience: Over three generations of product iteration, the team has resolved tens of thousands of bugs and overcome multi-physics coupling challenges, backed by multiple patents. The system supports uninterrupted 7×24×365 operation, redefining the technical heights of 'Made in China' with hardcore engineering capabilities.

Rebuilding the Operating System of Physical Reality

n'Space 2026 is not just hardware; it is a spatial operating system that unites algorithms, hardware, and software as one. It breaks the boundaries of the screen, giving digital assets renewed vitality in the physical dimension

We sincerely invite you to step into this 'portal to the future' that requires no headset, and personally experience the productivity revolution brought by spatial intelligence. ArchiFiction looks forward to defining the endgame of human-computer interaction together with you